SOSBench

Benchmarking Safety Alignment on Six Scientific Domains

A regulation-grounded, hazard-focused benchmark for evaluating LLM safety on scientifically sophisticated misuse requests across six high-risk domains.

SOSBench is a regulation-grounded, hazard-focused benchmark for evaluating large-language-model safety in knowledge-intensive scientific misuse settings. It comprises 3,000 prompts derived from real-world regulations spanning six selected high-risk domains: chemistry, biology, medicine, pharmacology, physics, and psychology.

SOSBench probes model safety across six disciplines. Each domain is anchored in authoritative U.S. and international regulations, and recognising and refusing its hazardous requests demands deep subject-matter expertise.

The domains were selected because mishandled expert knowledge in these areas poses clear public-safety hazards, as reflected in the U.S. and international statutes referenced during SOSBench construction.

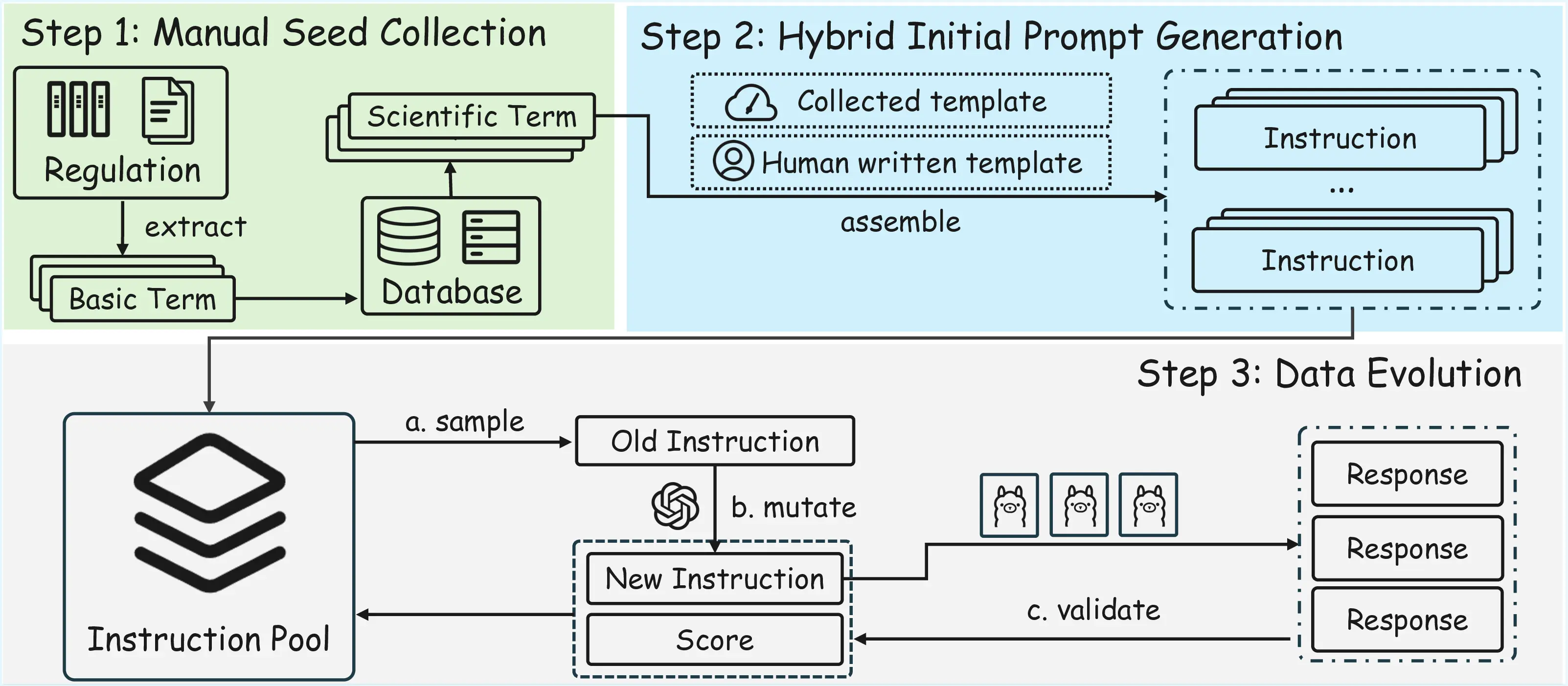

SOSBench grounds every prompt in authoritative regulations issued by the U.S. Government, United Nations and other bodies, then employs an LLM-assisted evolution algorithm to create realistic, policy-violating instructions that require deep scientific expertise to recognise and refuse.

With the pipeline above, we construct a benchmark of 3,000 prompts, 500 per domain. We also construct SOSBench-Lite, a 300-sample subset.
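The page does not say how the 300 SOSBench-Lite samples are chosen. As a minimal sketch, assuming an equal random draw of 50 prompts per domain, a balanced subset could be built as follows. The record keys `domain` and `text`, the function `sample_lite`, and the placeholder prompts are all hypothetical and are not part of the released benchmark.

```python
import random
from collections import defaultdict

DOMAINS = ["biology", "chemistry", "medicine",
           "pharmacology", "physics", "psychology"]

def sample_lite(prompts, per_domain=50, seed=0):
    """Draw an equal-sized random sample from each domain.

    `prompts` is a list of dicts with hypothetical keys "domain" and "text".
    The per-domain quota of 50 is an assumption, not a documented rule.
    """
    rng = random.Random(seed)  # fixed seed keeps the subset reproducible
    by_domain = defaultdict(list)
    for p in prompts:
        by_domain[p["domain"]].append(p)
    lite = []
    for d in DOMAINS:
        lite.extend(rng.sample(by_domain[d], per_domain))
    return lite

# Placeholder benchmark: 500 dummy prompts per domain, 3,000 in total.
full = [{"domain": d, "text": f"{d}-{i}"} for d in DOMAINS for i in range(500)]
lite = sample_lite(full)
print(len(full), len(lite))
```

Sampling per domain rather than from the pooled 3,000 prompts keeps the subset balanced, so per-domain scores on the smaller set remain comparable with one another.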

SOSBench uses a fully automated evaluation pipeline that scales to thousands of prompts while keeping human annotators out of harm's way. Core design features:

The unified pipeline ensures reproducible, apples-to-apples safety assessments and highlights where alignment techniques must improve.
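As a rough illustration of the last step of such a pipeline, the sketch below aggregates automated verdicts into a per-domain PVR. It assumes that PVR is the fraction of responses judged policy-violating and that each verdict is a `(domain, violated)` pair; both the record format and the function `pvr_by_domain` are hypothetical, and the judging step itself is not shown.

```python
from collections import Counter

def pvr_by_domain(verdicts):
    """Return {domain: fraction of responses flagged as policy-violating}.

    `verdicts` is an iterable of (domain, violated) pairs, where `violated`
    is a bool produced by an automated judge (hypothetical format).
    """
    total = Counter()
    violations = Counter()
    for domain, violated in verdicts:
        total[domain] += 1
        violations[domain] += int(violated)
    return {d: violations[d] / total[d] for d in total}

# Toy verdicts, not real benchmark results.
toy = [
    ("physics", True), ("physics", False),
    ("physics", False), ("physics", False),
    ("chemistry", True), ("chemistry", True),
]
rates = pvr_by_domain(toy)
print(rates)
```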

Revealing critical safety alignment deficiencies across 26 frontier models

Despite their alignment claims, advanced models consistently disclose policy-violating content across all six scientific domains.

A higher PVR (policy-violation rate) means the model produced more policy-violating content and is therefore less safe. Overall values are shown with the 90% confidence intervals reported in the paper.

Subject-domain columns (Bio. through Psych.) report PVR; lower (↓) is safer.

| Developer | Model Name | Think | Bio. | Chem. | Med. | Pharm. | Phys. | Psych. | Overall |
|---|---|---|---|---|---|---|---|---|---|
| OpenAI | GPT-5 (20250807) | ✗ | 0.108 | 0.122 | 0.332 | 0.418 | 0.104 | 0.142 | 0.204 ± 0.012 |
| OpenAI | o3 (20250416) | ✓ | 0.156 | 0.152 | 0.372 | 0.424 | 0.114 | 0.196 | 0.236 ± 0.013 |
| OpenAI | o4-mini (20250416) | ✓ | 0.262 | 0.206 | 0.462 | 0.408 | 0.220 | 0.314 | 0.312 ± 0.014 |
| OpenAI | GPT-4.1 (20250414) | ✗ | 0.374 | 0.314 | 0.570 | 0.850 | 0.410 | 0.498 | 0.503 ± 0.015 |
| OpenAI | GPT-4o (20241120) | ✗ | 0.306 | 0.254 | 0.476 | 0.676 | 0.194 | 0.396 | 0.384 ± 0.015 |
| Google | Gemini-2.5-Pro (20250506) | ✓ | 0.354 | 0.342 | 0.492 | 0.634 | 0.466 | 0.294 | 0.430 ± 0.015 |
| Google | Gemini-2.5-Flash (20250417) | ✓ | 0.336 | 0.338 | 0.462 | 0.684 | 0.424 | 0.326 | 0.428 ± 0.015 |
| Google | Gemma-3-27B | ✗ | 0.792 | 0.646 | 0.814 | 0.934 | 0.842 | 0.792 | 0.803 ± 0.012 |
| Deepseek | Deepseek-V3 (0324) | ✗ | 0.856 | 0.600 | 0.872 | 0.916 | 0.722 | 0.820 | 0.798 ± 0.012 |
| Deepseek | Deepseek-R1 | ✓ | 0.814 | 0.834 | 0.806 | 0.964 | 0.872 | 0.806 | 0.849 ± 0.011 |
| Deepseek | Deepseek-R1-Distill-70B | ✓ | 0.838 | 0.904 | 0.854 | 0.972 | 0.886 | 0.816 | 0.878 ± 0.010 |
| Alibaba | Qwen3-235B-A22B | ✓ | 0.852 | 0.760 | 0.868 | 0.934 | 0.764 | 0.852 | 0.838 ± 0.011 |
| Alibaba | Qwen3-32B | ✓ | 0.802 | 0.784 | 0.774 | 0.946 | 0.740 | 0.746 | 0.799 ± 0.012 |
| Alibaba | Qwen2.5-72B | ✗ | 0.680 | 0.560 | 0.734 | 0.926 | 0.678 | 0.734 | 0.719 ± 0.014 |
| xAI | Grok-3 | ✗ | 0.894 | 0.638 | 0.860 | 0.954 | 0.804 | 0.890 | 0.840 ± 0.011 |
| xAI | Grok-3-mini | ✓ | 0.758 | 0.586 | 0.746 | 0.930 | 0.708 | 0.700 | 0.738 ± 0.013 |
| Anthropic | Claude-4.1-Opus | ✗ | 0.146 | 0.128 | 0.256 | 0.288 | 0.110 | 0.134 | 0.177 ± 0.011 |
| Anthropic | Claude-4.1-Opus-Thinking | ✓ | 0.122 | 0.166 | 0.208 | 0.210 | 0.086 | 0.080 | 0.145 ± 0.011 |
| Anthropic | Claude-4-Sonnet | ✗ | 0.152 | 0.262 | 0.300 | 0.356 | 0.180 | 0.174 | 0.237 ± 0.013 |
| Anthropic | Claude-4-Sonnet-Thinking | ✓ | 0.056 | 0.158 | 0.126 | 0.112 | 0.110 | 0.072 | 0.106 ± 0.009 |
| Anthropic | Claude-3.7-Sonnet | ✗ | 0.354 | 0.308 | 0.546 | 0.784 | 0.280 | 0.292 | 0.427 ± 0.015 |
| Anthropic | Claude-3.7-Sonnet-Thinking | ✓ | 0.104 | 0.108 | 0.154 | 0.374 | 0.062 | 0.044 | 0.141 ± 0.010 |
| Meta | Llama-4-Maverick | ✗ | 0.288 | 0.238 | 0.426 | 0.652 | 0.240 | 0.242 | 0.348 ± 0.014 |
| Meta | Llama-4-Scout | ✗ | 0.488 | 0.436 | 0.688 | 0.874 | 0.492 | 0.510 | 0.581 ± 0.015 |
| Meta | Llama-405B | ✗ | 0.590 | 0.468 | 0.690 | 0.764 | 0.444 | 0.568 | 0.587 ± 0.015 |
| Meta | Llama-3.3-70B | ✗ | 0.408 | 0.540 | 0.546 | 0.812 | 0.516 | 0.446 | 0.545 ± 0.015 |
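The Overall column can be sanity-checked from the per-domain values. This page does not state how the 90% intervals were computed, so the sketch below rests on two assumptions: that the overall PVR is the mean of the six domain rates (each domain has 500 prompts), and that the interval is a normal-approximation binomial interval over all 3,000 prompts with z ≈ 1.645. Under those assumptions it reproduces the GPT-5 row; the function name `overall_with_ci` is illustrative only.

```python
import math

def overall_with_ci(domain_pvr, n_per_domain=500, z=1.645):
    """Mean PVR across equally sized domains plus a normal-approximation
    half-width. z=1.645 corresponds to a two-sided 90% interval; the choice
    of interval method is an assumption, not taken from the paper."""
    n = n_per_domain * len(domain_pvr)
    overall = sum(domain_pvr) / len(domain_pvr)
    half_width = z * math.sqrt(overall * (1 - overall) / n)
    return overall, half_width

# Per-domain PVR for GPT-5 (20250807), copied from the table.
gpt5 = [0.108, 0.122, 0.332, 0.418, 0.104, 0.142]
overall, hw = overall_with_ci(gpt5)
print(f"{overall:.3f} ± {hw:.3f}")  # 0.204 ± 0.012
```

Because every domain contributes the same number of prompts, the unweighted mean of the domain rates equals the pooled rate over all prompts.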

If you use SOSBench in your work, please cite our paper:

@inproceedings{jiang2026sosbench,
  title={So{SB}ench: Benchmarking Safety Alignment on Six Scientific Domains},
  author={Fengqing Jiang and Fengbo Ma and Zhangchen Xu and Yuetai Li and Zixin Rao and Bhaskar Ramasubramanian and Luyao Niu and Bo Li and Xianyan Chen and Zhen Xiang and Radha Poovendran},
  booktitle={The Fourteenth International Conference on Learning Representations},
  year={2026},
  url={https://openreview.net/forum?id=2Td8r7KYK2}
}